PanoDR: Spherical Panorama Diminished Reality for Indoor Scenes

Jun 25, 2021

1 min read

Vasileios Gkitsas

Vladimiros Sterzentsenko

Nikolaos Zioulis

Georgios Albanis

Dimitrios Zarpalas

Abstract

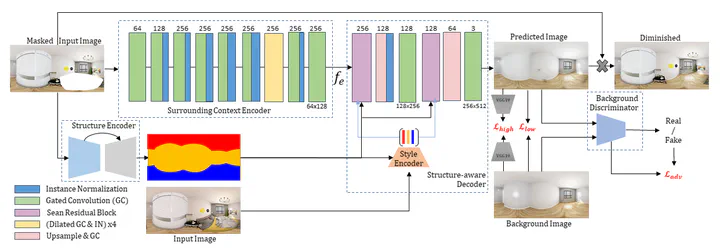

The rising availability of commercial 360 cameras, which democratize indoor scanning, has increased interest in novel applications such as interior space re-design. Diminished Reality (DR) fulfills the requirement of such applications to remove existing objects from the scene, essentially translating this into a counterfactual inpainting task. While recent advances in data-driven inpainting have shown significant progress in generating realistic samples, they are not constrained to produce results with reality-mapped structures. To preserve the ‘reality’ in indoor (re-)planning applications, preserving the scene’s structure is crucial. To ensure structure-aware counterfactual inpainting, we propose a model that first predicts the structure of an indoor scene and then uses it to guide the reconstruction of an empty, background-only representation of the same scene. We train and compare against other state-of-the-art methods on a version of the Structured3D dataset modified for DR, showing superior results in both quantitative metrics and qualitative results; more interestingly, our approach exhibits a much faster convergence rate. Code and models are available at

.

Publication

In 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW)
